Ethics: Veil of Ignorance

"Do not imagine that it is less an accident by which you find yourself master of the wealth which you possess, than that by which this man found himself king."

This post and the next each attempt to make the leap from the relatively uncontroversial von Neumann-Morgenstern utility theory to the much more contested idea that we should be adding people's utilities together.

This post makes this argument somewhat informally via a concept known as the veil of ignorance. The next post is much more rigorous, but, perhaps, less intuitively convincing.

The Veil of Ignorance

The Veil of Ignorance is a famous philosophical thought experiment that has arisen multiple times throughout the history of philosophy, but is most often associated with John Rawls (see "Veil of ignorance" and "Original position").

It starts as a thought experiment:

Imagine we took a group of reasonable people and threw them in a room - telling them to figure out how our society should work [sound familiar?]. However, we add a twist. Once they've decided on a set of rules to run society, they will be randomly born into it. They won't keep their race, sex, IQ, family, preferences, etc. The Veil of Ignorance is the claim that this would produce a more-or-less perfectly ethical social structure, because these people can't make decisions based on their own preferences, abilities, traits, and demographics.

Summatarianism

If you accept the veil of ignorance and you further accept that both individual and social "goodness" can (in theory) be assigned a number for each universe branch, then I claim that you must logically believe something like utilitarianism.

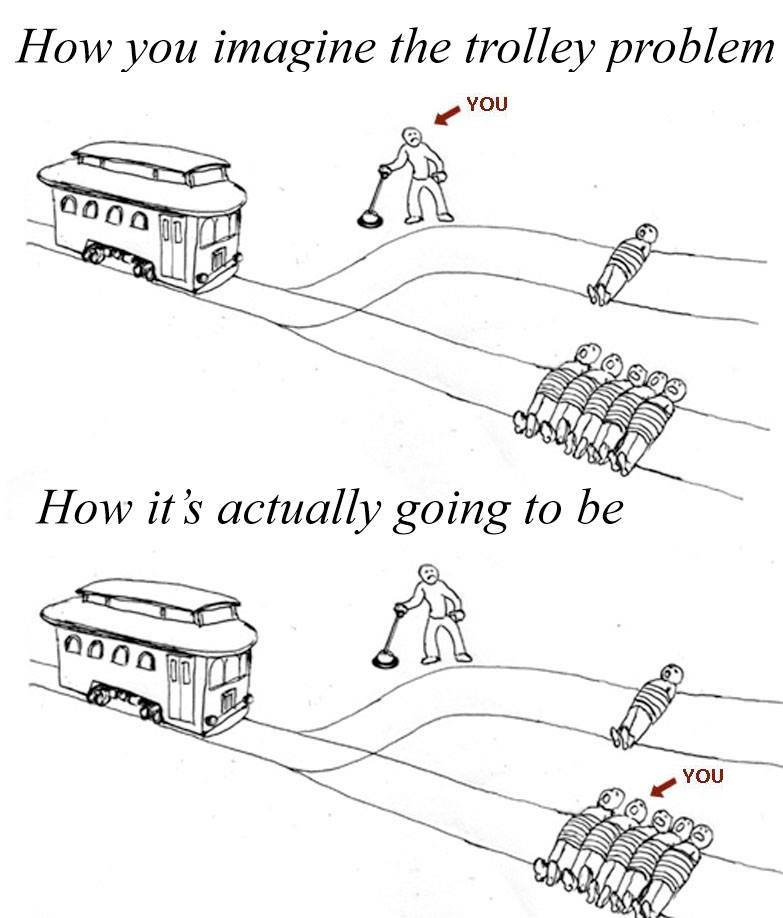

The reasoning is actually fairly simple. Imagine you live in a society with N people, and you have been selected to choose how to run it, but behind a veil of ignorance.

When you leave the veil of ignorance, you have a 1-in-N chance of becoming any given person. This means, by the definition of expected value (see "Expected value"), your expected utility after being born is simply the average of the individual utilities.
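The step from "1-in-N chance" to "average" can be checked with a small sketch. The utility numbers below are hypothetical and chosen only for illustration. The sketch assumes each person's well-being can be written as a single number, which is the premise stated above.

```python
from fractions import Fraction


def expected_utility(utilities):
    """Expected utility of being born as a uniformly random member of society."""
    n = len(utilities)
    prob = Fraction(1, n)  # 1-in-N chance of becoming each person
    return sum(prob * u for u in utilities)


def average_utility(utilities):
    """Plain arithmetic mean of the individual utilities."""
    return Fraction(sum(utilities), len(utilities))


# Hypothetical utilities for a four-person society (illustrative numbers only).
society = [2, 5, 9, 4]

# The probability-weighted sum and the average are the same quantity.
assert expected_utility(society) == average_utility(society)
print(expected_utility(society))  # 5
```

Exact fractions are used instead of floats so that the equality check is not affected by rounding.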

This is nearly the textbook definition of average utilitarianism: maximizing the average well-being of every person (see "Average and total utilitarianism"). The only issue is that utilitarianism is defined in philosophy as a type of consequentialism, and I don't see how either the veil of ignorance or what I discuss in my next post implies consequentialism. Instead, philosophers and lay people alike seem to conflate the idea that you can sum individual "goodness" with the idea that this individual goodness must be consequential.

As far as I can tell, no one has invented a word to mean utilitarianism sans consequentialism, so here's the one I propose: Summatarianism. Summa means "sum" or "total" in Latin, and is pronounced "sue-ma".

To be more specific, Summatarianism is the philosophical belief that ethics in a fixed population is equivalent to maximizing the sum of individual "goodness".

Rawls' Critique

Ironically, despite Rawls' advocacy for the Veil of Ignorance, he is perhaps the most famous critic of utilitarianism. The reason he gives for rejecting utilitarianism is a concept called the "separateness of persons" (see "John Rawls (1921 - 2002)"). Here's an excerpt (from "John Rawls"):

Each person possesses an inviolability founded on justice that even the welfare of society as a whole cannot override. For this reason justice denies that the loss of freedom for some is made right by a greater good shared by others. It does not allow that the sacrifices imposed on a few are outweighed by the larger sum of advantages enjoyed by many. Therefore in a just society the liberties of equal citizenship are taken as settled; the rights secured by justice are not subject to political bargaining or to the calculus of social interests.

I believe Rawls is missing an important point: if a rational person had to choose behind the veil of ignorance whether they'd want to enter Rawls' ideal society or the utilitarian/summatarian ideal society, they'd choose the latter, because it (by definition) maximizes their expected well-being.
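To make the comparison concrete, here is a minimal sketch with two hypothetical four-person societies. Two assumptions are mine and not part of the argument above: the utility numbers are invented, and Rawls' ideal society is modeled as the one that makes the worst-off person as well off as possible (a "maximin" reading). The chooser is assumed to maximize expected utility.

```python
from statistics import mean

# Hypothetical utility profiles (illustrative numbers only).
societies = {
    "maximin": [4, 4, 5, 5],         # best for the worst-off person
    "sum_maximizing": [3, 6, 7, 8],  # largest total (and average) utility
}


def expected_utility_behind_veil(utilities):
    """Uniform 1-in-N chance of being each person, so this is just the mean."""
    return mean(utilities)


def worst_off(utilities):
    """Utility of the least well-off person in the society."""
    return min(utilities)


# Which society does each decision rule pick?
chosen_by_expected_utility = max(
    societies, key=lambda name: expected_utility_behind_veil(societies[name])
)
chosen_by_maximin = max(societies, key=lambda name: worst_off(societies[name]))

print(chosen_by_expected_utility)  # sum_maximizing
print(chosen_by_maximin)           # maximin
```

With these numbers, the expected-utility chooser picks the sum-maximizing society (expected utility 6 versus 4.5), even though its worst-off member fares worse (3 versus 4). The two rules come apart only when different decision rules are applied behind the veil.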

Rawls is obviously free to reject utilitarianism if he thinks that it doesn't place enough weight on human rights/freedoms, but in doing so he must reject his very own veil-of-ignorance argument.